Difficulty is a lie.

Every game designer knows this. You spend months tuning your combat system, calibrating enemy health, testing damage values. You think you have it right. Then real players get their hands on it, and everything falls apart. Some players breeze through your “hard” section. Others hit a wall on something you thought was trivial. Difficulty isn’t a property of your game. It’s a relationship between your game and each specific player.

That insight sounds simple. Building a system that actually acts on it in real time, for groups of players with different skill levels, different character builds, and different configurations, is one of the harder engineering problems I’ve worked on. And the way we solved it turned out to be a direct encounter with what I now recognize as the composite AI problem.

The setup

The project was a co-op PvE game with an AI Game Master at its core. The Game Master’s job was to evaluate the current state of the players — their skills, their characters, their team composition — and adjust the challenge accordingly. Not once at the start of a session. Continuously, in real time, across a match involving one to four players whose configurations could vary enormously.

The design intent was clear: every group, regardless of composition, should feel a consistent level of challenge. Not easy. Not brutal. Calibrated. The kind of difficulty that keeps players in flow.
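To make "calibrated" concrete, here is a minimal sketch of one continuous adjustment step. It assumes the Game Master tracks a single observed success rate for the group and nudges a single difficulty scalar toward a target. The function name, the 0.6 target, and the gain are all hypothetical; the actual system evaluated far richer state (skills, builds, team composition) than one number.

```python
def adjust_difficulty(current: float,
                      observed_success: float,
                      target_success: float = 0.6,  # hypothetical target
                      gain: float = 0.5) -> float:   # hypothetical step size
    """Return a new difficulty in [0, 1] after one proportional correction.

    If the group succeeds more often than the target, difficulty goes up;
    if it succeeds less often, difficulty goes down.
    """
    error = observed_success - target_success
    proposed = current + gain * error
    # Clamp so repeated corrections can never leave the valid range.
    return min(1.0, max(0.0, proposed))


if __name__ == "__main__":
    difficulty = 0.5
    # A group that keeps winning 80% of encounters gets pushed harder each tick.
    for _ in range(3):
        difficulty = adjust_difficulty(difficulty, observed_success=0.8)
        print(round(difficulty, 2))
```

The point of the sketch is only the shape of the loop: measure, compare against a target, correct, repeat during the match rather than once at session start.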

Two sources of truth that don’t speak the same language

The first approach was rule-based. Designers would define explicit parameters: this encounter should feel hard, this one moderate, this mechanic is punishing for low-level characters. We encoded those intentions as constraints. The system was controllable, transparent, and completely disconnected from reality.

What designers think is hard and what players actually experience as hard are two different things. The rules captured intent, not outcome. The moment real player behavior entered the picture, the rule system started lying to us.

So we moved toward a neural network. The idea was to learn difficulty from actual play data: what combinations of player profiles, character builds, and encounter parameters produced the outcomes we wanted. Let the data speak.

The problem was convergence. To train the model meaningfully, we needed approximately 3,000 matches per adjustment cycle. In a production environment, that is an enormous amount of data to collect before you can act. Every time we touched the gameplay — rebalanced a mechanic, adjusted an enemy, changed a character ability — we were effectively resetting the learning process. The model needed to relearn what it had just understood.

We were stuck between two broken systems. The rule-based approach was fast but wrong. The neural approach was right but slow.

The hybrid that actually worked

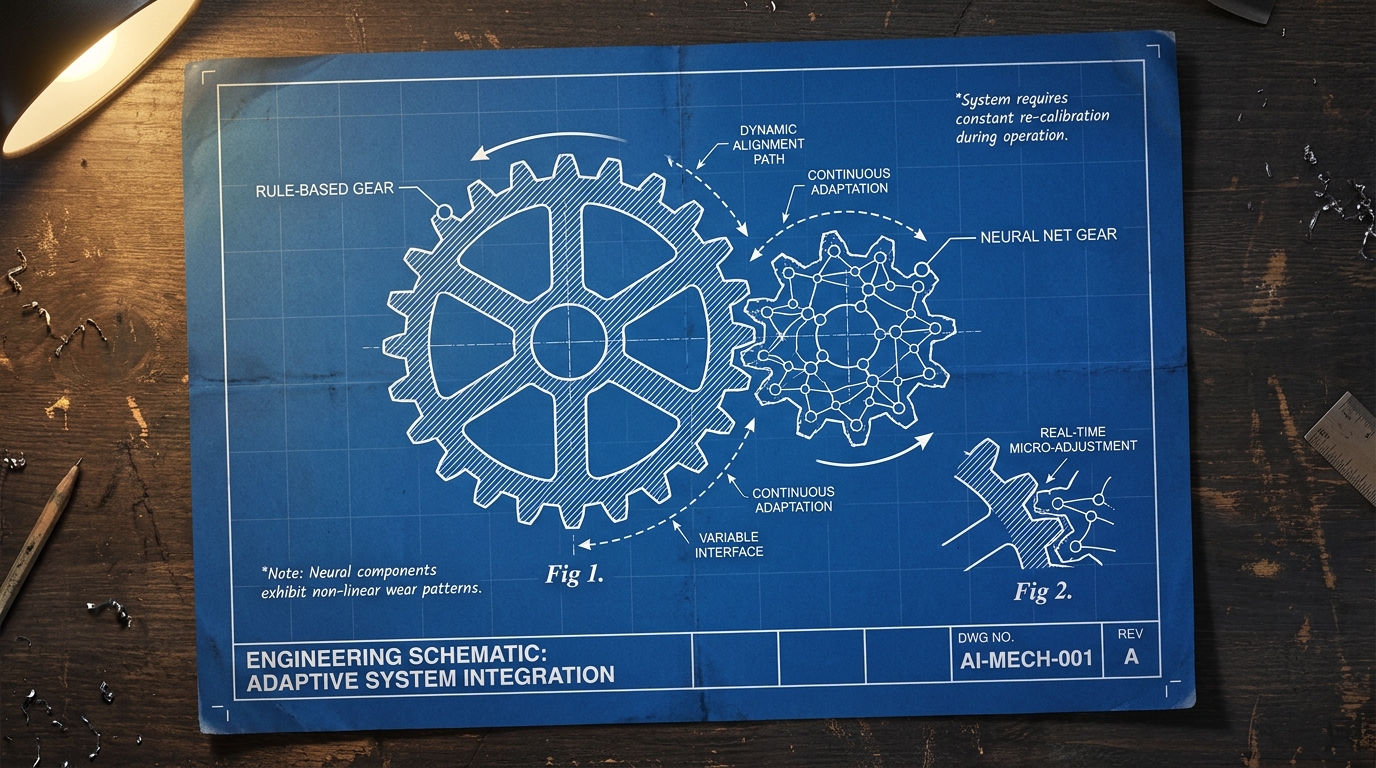

The solution was to make them cooperate, not compete.

We kept the neural network as the primary system for evaluating real difficulty. But we introduced the designer evaluations as a structured prior: a top-down signal that gave the model a starting point before sufficient play data was available. When a new mechanic was introduced, the system didn’t start from zero. It started from what designers believed about that mechanic, then updated as real data came in.

This accelerated convergence significantly. The model no longer needed thousands of matches to develop a baseline. It inherited one, then refined it. The rule-based layer wasn’t a fallback. It was a scaffold.
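The scaffold idea can be illustrated with a much simpler stand-in than the neural network we actually used. The sketch below treats the designer rating as pseudo-counts in a Beta-Binomial style estimate: with no data the estimate equals the designer's belief, and as matches accumulate the observed failure rate takes over. The class name, fields, and the "virtual matches" weight are assumptions made for illustration, not the production design.

```python
from dataclasses import dataclass


@dataclass
class DifficultyEstimate:
    """Running difficulty estimate for one mechanic (hypothetical structure)."""
    designer_prior: float   # designer's expected failure rate, in [0, 1]
    prior_weight: float     # how many "virtual matches" that belief is worth
    failures: int = 0
    matches: int = 0

    def record_match(self, failed: bool) -> None:
        """Fold one real match outcome into the estimate."""
        self.matches += 1
        if failed:
            self.failures += 1

    def estimate(self) -> float:
        """Blend of designer belief and observed data, weighted by evidence."""
        virtual_failures = self.designer_prior * self.prior_weight
        return (virtual_failures + self.failures) / (self.prior_weight + self.matches)

    def reset_after_rebalance(self) -> None:
        """Discard stale play data after a gameplay change; keep the prior."""
        self.failures = 0
        self.matches = 0


if __name__ == "__main__":
    # Designers believe a new mechanic fails players about 70% of the time.
    mechanic = DifficultyEstimate(designer_prior=0.7, prior_weight=20)
    print(round(mechanic.estimate(), 2))   # starts at the designer's belief

    # Real play shows only 20 failures in 80 matches.
    for i in range(80):
        mechanic.record_match(failed=(i < 20))
    print(round(mechanic.estimate(), 2))   # pulled toward the observed rate
```

The `prior_weight` knob is the design choice that matters here: a large value trusts designers longer, a small value lets sparse data override them quickly. After a rebalance, resetting the counts returns the estimate to the designer baseline instead of to nothing, which is the cold-start behavior described above.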

What made this work wasn’t the technical implementation. It was accepting that the two systems were answering different questions. The rule-based layer encoded design intent: what we wanted the experience to be. The neural layer encoded empirical reality: what the experience actually was. Neither was complete on its own. Together, they produced something neither could achieve alone.

What this has to do with world models

I didn’t think of this as a contribution to AI research at the time. It was a production problem. We needed the game to ship, and this was the architecture that made it work.

But looking back, the structure of the problem is not specific to games. Any system that has to operate intelligently in a real environment faces the same tension: explicit knowledge from domain experts that is fast, controllable, and incomplete, versus learned representations that are accurate but data-hungry and opaque. Making those two things cooperate without losing the controllability of the first or the accuracy of the second is, in different language, exactly what research on hybrid AI architectures is working on today.

The game industry has been running these experiments in production for decades. Not in research labs, not on benchmark datasets, but on live systems with real users generating real-time feedback at scale. The problems we solved weren’t always solved elegantly, and the solutions weren’t always principled. But they were real.

World models need to be controllable. They need to handle situations where training data is sparse. They need to integrate structured human knowledge with learned representations. They need to do all of this in real time, under operational constraints, with no tolerance for cold-start failures.

If that sounds familiar, it should. We’ve been solving versions of this problem for a long time. We just called it game design.